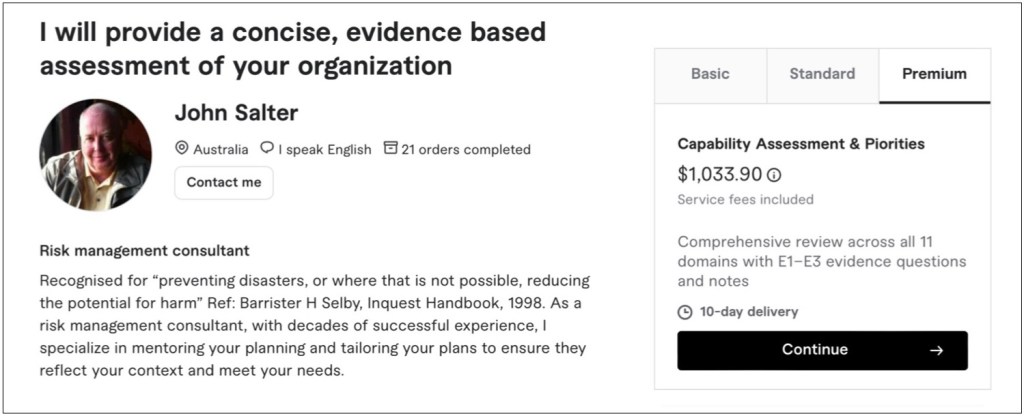

Purpose

This assessment provides a concise, evidence‑based view of how well the organisation is set up to run, grow, and handle shocks, not just how much documentation it has. It combines a practical business lens with proven public‑sector and private‑sector capability models.

Framework structure

The method assesses capability across 11 domains that together describe how the business works:

- Context, Scope, Stakeholders & Strategy

- Leadership, Governance, Culture & Accountability

- Integrated Risk & Opportunity Management

- Framework, Design & Integration into Operations

- Planning, Objectives, Strategies & Change

- People, Capability, Culture, Communication & Awareness

- Customers, Markets, Stakeholders & Supply Chain

- Operational Control, Design, BCM Plans & Emergency Response

- Information, Data, Documentation & Digital

- Performance Measurement, Monitoring, Exercising & Review

- Learning, Improvement, Innovation & Resilience Evolution

Each domain is assessed using evidence questions tailored to the organisation’s size, context, and sector.

Three evidence tests (E1–E3)

For each domain we look for three levels of evidence:

- E1 – Exists: Do the core structures and processes exist? (Policies, frameworks, processes, roles, plans, registers, documented approaches.)

- E2 – Enabled: Are they supported and usable? (Clear owners, resources, training, tools, data, and regular review cycles.)

- E3 – Executed: Are they actually used and making a difference? (Real examples where they shape decisions, behaviours, investments, and outcomes.)

In practical terms, E1 and E2 ask “have we built the system?”, while E3 asks “does the system change what happens?”

Maturity scale (N–P–L–F)

Evidence across E1–E3 is then converted into a four‑step maturity rating for each domain:

- N – Absent: No meaningful evidence; capability does not meet current needs.

- P – Ad hoc: Informal, person‑dependent, inconsistent; pockets of good practice but not reliable.

- L – Defined: Documented and repeatable, but weakly enforced; often strong on design, weaker on routine use.

- F – Operational: Embedded, consistent, tested, and reviewed; good evidence that it works in practice.

The assessment also considers how well each domain is positioned for future challenges, using terms such as Emerging, Developing, Embedded, and Leading. These are narrative descriptors only; N/P/L/F remains the formal rating scale.
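The method does not spell out the exact rule for converting E1–E3 evidence into an N/P/L/F rating, so the following is only an illustrative sketch of how such a conversion could work. It assumes each evidence test is reduced to a simple yes/no judgement, and the names `Evidence` and `rate_domain` are hypothetical. In practice the rating is likely to rest on assessor judgement across many evidence questions rather than three flags.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    """Yes/no outcome of the three evidence tests for one domain (assumed simplification)."""
    exists: bool    # E1: core structures and processes exist
    enabled: bool   # E2: they are supported and usable
    executed: bool  # E3: they are used and make a difference


def rate_domain(ev: Evidence) -> str:
    """Map E1-E3 evidence to N/P/L/F using an assumed, illustrative rule."""
    if ev.exists and ev.enabled and ev.executed:
        return "F"  # Operational: built, supported, and shown to work in practice
    if ev.exists and ev.enabled:
        return "L"  # Defined: designed and resourced, but weak evidence of routine use
    if ev.exists or ev.enabled or ev.executed:
        return "P"  # Ad hoc: partial or person-dependent evidence only
    return "N"      # Absent: no meaningful evidence


print(rate_domain(Evidence(exists=True, enabled=True, executed=False)))  # prints "L"
```

Under this assumed rule, a domain with informal good practice but no documented approach (E3 without E1 or E2) is rated P, which matches the "pockets of good practice but not reliable" description.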

Outputs

A typical assessment delivers:

- Overall maturity position (e.g. percentage at Defined vs Operational).

- Domain‑by‑domain ratings and commentary, highlighting strengths, gaps, and underlying evidence.

- A simple heat‑map or scorecard showing current and target maturity across the 11 domains.

- A short list of priority gaps and practical actions, sequenced so effort is focused where it matters most.
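As a rough illustration of the first output, the overall maturity position can be expressed as the share of domains at each rating. The sketch below assumes every domain carries equal weight, which the method does not state; the function name `maturity_position` and the sample ratings are hypothetical and not drawn from any real assessment.

```python
from collections import Counter

LEVELS = ("N", "P", "L", "F")  # Absent, Ad hoc, Defined, Operational


def maturity_position(ratings: dict[str, str]) -> dict[str, float]:
    """Return the percentage of domains at each maturity level (equal weighting assumed)."""
    if not ratings:
        raise ValueError("at least one domain rating is required")
    unknown = set(ratings.values()) - set(LEVELS)
    if unknown:
        raise ValueError(f"unknown rating(s): {sorted(unknown)}")
    counts = Counter(ratings.values())
    total = len(ratings)
    return {level: 100 * counts[level] / total for level in LEVELS}


# Hypothetical ratings for four of the 11 domains, for illustration only.
sample = {
    "Integrated Risk & Opportunity Management": "L",
    "Information, Data, Documentation & Digital": "F",
    "Customers, Markets, Stakeholders & Supply Chain": "F",
    "Learning, Improvement, Innovation & Resilience Evolution": "P",
}
print(maturity_position(sample))
# prints {'N': 0.0, 'P': 25.0, 'L': 25.0, 'F': 50.0}
```

The same per-domain ratings, paired with target ratings, would feed the heat‑map or scorecard.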