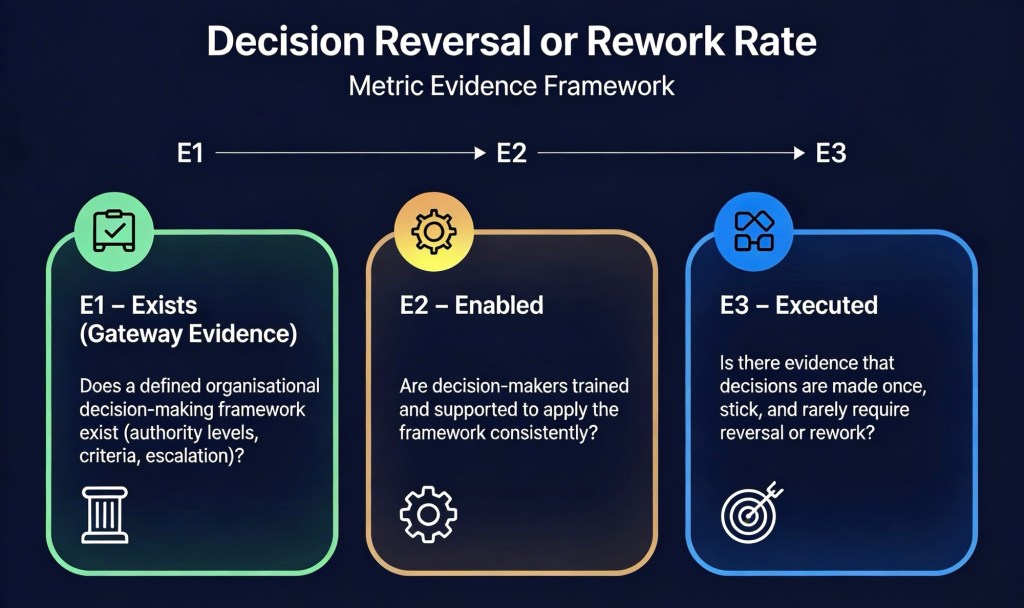

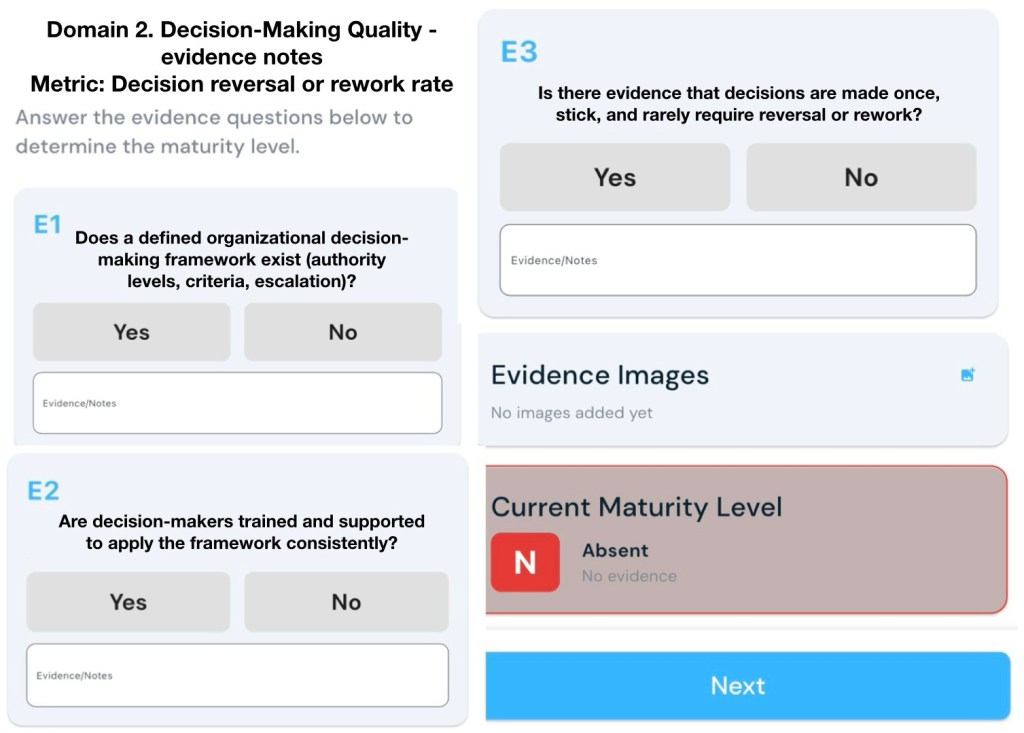

Metric: Decision reversal or rework rate

E1 – Exists (Gateway Evidence)

Does a defined organisational decision-making framework exist (authority levels, criteria, escalation)?

E2 – Enabled

Are decision-makers trained and supported to apply the framework consistently?

E3 – Executed

Is there evidence that decisions are made once, stick, and rarely require reversal or rework?

Support the assessment with three types of material: artefacts that show the framework exists (E1), evidence that people are enabled to use it (E2), and data or records that show stable, low‑rework decisions (E3).

Below are examples and notes you could look for under each level.

E1 – Exists (defined framework)

Look for formal, current documents that clearly describe how decisions are supposed to be made, who can make them, and when to escalate.

Possible evidence:

- Delegation of authority (DoA) or decision‑rights policy that defines authority levels (e.g. Board, CEO, Executive, BU Head, Manager) with financial or risk thresholds and decision types for each level.[2][3]

- Decision‑making and escalation matrix that shows: decision categories, approval roles, escalation levels, and routing rules for cross‑functional or high‑risk decisions.[4]

- Standard decision framework or methodology (e.g. decision trees, criteria‑based scoring, risk/benefit assessments) documented in procedures, playbooks, or methodology guides.[5][1]

- Process maps including decision points, approval steps, and escalation paths for key workflows (projects, change control, product, customer exceptions, etc.).[6][3]

- Defined quality metrics and KPIs that explicitly include “decision reversal rate”, “rework rate”, or similar, including how rework is defined and calculated (e.g. rework rate = effort on rework ÷ total effort × 100).[7][8]

Notes that support an “Exists” rating:

- Framework is version‑controlled, approved by appropriate governance body, and accessible on the intranet or QMS.

- Definitions are explicit: what counts as a decision, what counts as a reversal, and what counts as rework versus scope change.[9]

- Escalation criteria are risk‑ and value‑based (e.g. dollar thresholds, customer impact, safety, compliance), not just “ask your manager”.[2][4]
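As an illustration of what risk‑ and value‑based escalation criteria can look like once written down, here is a minimal Python sketch. The authority levels are taken from the DoA example above; the dollar limits, the `safety_or_compliance_impact` flag, and the rule that such decisions go at least to Executive level are hypothetical placeholders, not values from any cited source.

```python
# Authority levels, lowest to highest (from the DoA example in this section).
LEVELS = ["Manager", "BU Head", "Executive", "CEO", "Board"]

# Hypothetical financial limits per level; the Board has no upper limit.
LIMITS = {
    "Manager": 10_000,
    "BU Head": 100_000,
    "Executive": 1_000_000,
    "CEO": 10_000_000,
}

# Hypothetical rule: safety or compliance impact escalates to at least this level.
MIN_LEVEL_FOR_RISK = "Executive"


def required_approver(amount: float, safety_or_compliance_impact: bool = False) -> str:
    """Return the lowest authority level allowed to approve the decision."""
    if amount < 0:
        raise ValueError("amount must be non-negative")

    # Default to the top level, then look for the lowest level whose limit covers the amount.
    level_index = len(LEVELS) - 1
    for i, level in enumerate(LEVELS[:-1]):
        if amount <= LIMITS[level]:
            level_index = i
            break

    # Risk-based escalation overrides a low financial value.
    if safety_or_compliance_impact:
        level_index = max(level_index, LEVELS.index(MIN_LEVEL_FOR_RISK))

    return LEVELS[level_index]


if __name__ == "__main__":
    print(required_approver(50_000))
    print(required_approver(5_000, safety_or_compliance_impact=True))
```

A framework that can be expressed this precisely is easier to audit than one that relies on "ask your manager", because each decision has exactly one required approver.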

E2 – Enabled (trained and supported)

Here you want evidence that decision‑makers understand the framework and have practical support to apply it consistently.

Possible evidence:

- Training materials and records: slides, e‑learning modules, and attendance logs for decision‑making, delegation of authority, and escalation training targeted at managers and leaders.[10][11]

- Induction/onboarding content that explains authority levels, decision criteria, escalation paths, and how to use the framework in day‑to‑day work.[1]

- Coaching, workshops or clinics where managers practise applying the framework to real cases and receive feedback (e.g. “decision workshops” or “governance clinics”).[11][5]

- Job descriptions and performance criteria for leaders that reference decision‑making quality, appropriate escalation, and adherence to the framework.[3]

- Decision tools embedded in systems: workflow or approval systems that enforce authority thresholds, require justification fields, and route escalations automatically according to the matrix.[6][3]

- Guidance artefacts: quick‑reference guides, checklists, decision templates (with sections for options, criteria, risks, and rationale) that are used in steering committees or project boards.[3][1]

Notes that support an “Enabled” rating:

- Training covers both the mindset (ownership, bias awareness, escalation discipline) and the skillset (using criteria, assessing risks, documenting rationale).[10]

- When interviewed, decision‑makers can accurately describe their decision authority, when to escalate, and how rework and reversals are tracked.

- People analytics or surveys show reasonable confidence in decision rights and clarity of who decides what.[1]

E3 – Executed (low reversals/rework in practice)

For E3 you need evidence that decisions are made once, largely stick, and do not frequently need to be re‑decided or reworked.

Define and measure:

- A clear operational definition of “decision reversal” (e.g. an approved decision later overturned or materially changed) and “decision rework” (effort spent revisiting decisions previously marked complete).[9][8]

- A standard metric such as:

  - Decision reversal rate = number of reversed decisions ÷ total decisions in period.

  - Decision‑related rework rate = effort on rework caused by poor/changed decisions ÷ total effort.[7][8]
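The two metrics above can be sketched in Python as follows. This is only an illustration: the function names and the sample figures (3 reversals out of 40 decisions, 120 rework hours out of 1,600 total hours) are made up, and both functions return a fraction that can be multiplied by 100 to report a percentage.

```python
def decision_reversal_rate(reversed_decisions: int, total_decisions: int) -> float:
    """Number of reversed decisions divided by total decisions in the period."""
    if total_decisions <= 0:
        raise ValueError("total_decisions must be positive")
    if not 0 <= reversed_decisions <= total_decisions:
        raise ValueError("reversed_decisions must be between 0 and total_decisions")
    return reversed_decisions / total_decisions


def decision_rework_rate(rework_effort: float, total_effort: float) -> float:
    """Effort on decision-related rework divided by total effort (same unit, e.g. hours)."""
    if total_effort <= 0:
        raise ValueError("total_effort must be positive")
    if not 0 <= rework_effort <= total_effort:
        raise ValueError("rework_effort must be between 0 and total_effort")
    return rework_effort / total_effort


if __name__ == "__main__":
    # Hypothetical quarter: 3 of 40 decisions reversed, 120 of 1,600 hours spent on rework.
    print(f"Reversal rate: {decision_reversal_rate(3, 40):.1%}")
    print(f"Rework rate:   {decision_rework_rate(120, 1_600):.1%}")
```

Whatever implementation is used, the counting rules behind the inputs (what counts as a decision, a reversal, and rework) need to match the operational definitions agreed above, otherwise rates are not comparable across periods.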

Possible evidence:

- Trend reports or dashboards showing stable or improving decision reversal/rework rates over several periods, ideally segmented by function, project type or decision level.[8][6]

- Project and change logs showing relatively few instances where major scope, investment, or policy decisions are reversed after approval, and where reversals occur they are linked to new information rather than avoidable errors.

- Issue and incident analyses that quantify rework effort and identify root causes, with only a small proportion attributed to “poor initial decision” or “unclear authority/escalation”.[13][6]

- Stage‑gate or governance records where decisions are clearly documented once with rationale, and subsequent reviews focus on progress, not re‑arguing the same decision.

- Retrospectives or lessons‑learned reports noting decreasing rework or fewer “churn” cycles due to indecision or frequent direction changes.[12][6]

- Audit or compliance reviews confirming that approvals align with authority thresholds and that exceptions/escalations follow the defined matrix.[3]
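A trend report segmented by period and function, as described in the first item above, could be derived from a decision log along these lines. This is a sketch under assumptions: the record layout `(period, function, was_reversed)` and the sample data are hypothetical, and a real log would likely come from a workflow or governance system.

```python
from collections import defaultdict


def reversal_rate_by_segment(records):
    """Compute the reversal rate for each (period, function) segment.

    records: iterable of (period, function, was_reversed) tuples.
    Returns a dict mapping (period, function) to a fraction between 0 and 1.
    """
    totals = defaultdict(int)
    reversals = defaultdict(int)
    for period, function, was_reversed in records:
        key = (period, function)
        totals[key] += 1
        if was_reversed:
            reversals[key] += 1
    # Sort keys so periods and functions appear in a stable order for reporting.
    return {key: reversals[key] / totals[key] for key in sorted(totals)}


if __name__ == "__main__":
    # Hypothetical decision log entries.
    log = [
        ("2024-Q1", "Finance", False),
        ("2024-Q1", "Finance", True),
        ("2024-Q1", "IT", False),
        ("2024-Q2", "Finance", False),
        ("2024-Q2", "Finance", False),
        ("2024-Q2", "IT", True),
        ("2024-Q2", "IT", False),
    ]
    for (period, function), rate in reversal_rate_by_segment(log).items():
        print(f"{period} {function}: {rate:.0%}")
```

Segments with very few decisions produce volatile rates, so it is worth reporting the underlying counts alongside the percentages.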

Notes that support an “Executed” rating:

- Data shows rework and reversals are the exception, not the norm (e.g. a relatively low rework rate compared to internal targets or industry norms).[8][7]

- Where significant rework or reversal occurs, documented root causes are external (new regulation, major market change) rather than controllable factors (unclear criteria, wrong approver, skipped escalation).

- Managers and teams report fewer “back‑and‑forth” approvals, less churn, and shorter decision cycle times as the framework matures.[1][3]

How to use this in an assessment

When performing the assessment, you could:

- Map your actual artefacts and data against the examples above and note any gaps.

- Interview a sample of decision‑makers to confirm understanding and observe whether their behaviours match the documented framework.

- Review a handful of recent major decisions (e.g. projects, investments, policy changes) and trace: where the decision was made, whether it aligned with authority thresholds, and whether it was later reversed or required substantial rework.
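The sample review in the last step can be recorded in a structured way so that findings are comparable across decisions. The sketch below is one hypothetical layout: the `SampledDecision` fields, the level ranking, and the example decisions are all illustrative assumptions rather than a prescribed template.

```python
from dataclasses import dataclass

# Hypothetical ranking of authority levels, lowest to highest.
LEVEL_RANK = {"Manager": 1, "BU Head": 2, "Executive": 3, "CEO": 4, "Board": 5}


@dataclass
class SampledDecision:
    name: str
    required_level: str          # level required by the authority thresholds
    actual_approver_level: str   # level that actually approved the decision
    later_reversed: bool
    substantial_rework: bool


def review_findings(sample):
    """Return (decision name, list of issues) for each sampled decision."""
    findings = []
    for decision in sample:
        issues = []
        if LEVEL_RANK[decision.actual_approver_level] < LEVEL_RANK[decision.required_level]:
            issues.append("approved below required authority level")
        if decision.later_reversed:
            issues.append("later reversed")
        if decision.substantial_rework:
            issues.append("required substantial rework")
        findings.append((decision.name, issues))
    return findings


if __name__ == "__main__":
    sample = [
        SampledDecision("CRM replacement", "Executive", "Executive", False, False),
        SampledDecision("Pricing policy change", "CEO", "BU Head", True, True),
    ]
    for name, issues in review_findings(sample):
        print(name, "->", issues or ["no issues found"])
```

An empty issue list for most sampled decisions supports an E3 rating; recurring authority mismatches point back to gaps at E1 or E2.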

These evidence types, combined with notes on quality and consistency, give you a documented basis for your rating at each level (E1, E2, E3) against the “decision reversal or rework rate” metric.

Sources

[1] Clear Boundaries Create Freedom: Delegation Authority Framework … https://morganhr.com/blog/why-clear-boundaries-create-true-freedom-the-delegation-of-authority-framework-that-transforms-decision-making/

[2] Decision-Making & Escalation Matrix Policy https://bsinve.com/wp-content/uploads/2025/07/Decision-Making-Escalation-Matrix.pdf

[3] Getting organizational decision making right | Deloitte Insights https://www.deloitte.com/us/en/insights/topics/talent/organizational-decision-making.html

[4] Mastering Approval Role Escalation Hierarchies For … https://www.myshyft.com/blog/escalation-hierarchy-setup/

[5] Decision Frameworks | Decision Modeling and Analysis | Decision … http://www.decisionframeworks.com

[6] Rework rate in IT: impact and solutions – OGI Digital https://ogi.digital/insights/rework-rate-in-it-impact-and-solutions/

[7] Are You Tracking Rework? What It Is & How to Quantify It https://www.cfodynamics.com.au/blog/tracking-rework/

[8] Rework Rate https://www.minware.com/guide/metrics/rework-rate

[9] Rework Rate: Definition, Examples & Best Practices (2025) – Docsie https://www.docsie.io/blog/glossary/rework-rate/

[10] Training – Decision Analysis Course https://decisionframeworks.com/training

[11] Decision Making Training – LSA Global https://lsaglobal.com/decision-making-training/

[12] Measure rework & waste – KEDEHub Documentation https://docs.kedehub.io/kede/kede-rework-waste.html

[13] Project Success from Rework Cycle after Launch | PMI https://www.pmi.org/learning/library/project-success-rework-cycle-launch-5008

[14] A decision-making model for the rework of defective products https://www.emerald.com/ijqrm/article/38/1/68/145665/A-decision-making-model-for-the-rework-of

[15] Rework vs Scrap: A Balanced Approach to Decision Making in … https://www.prpquality-us.com/post/rework-vs-scrap-a-balanced-approach-to-decision-making-in-manufacturing